Written: September 2022

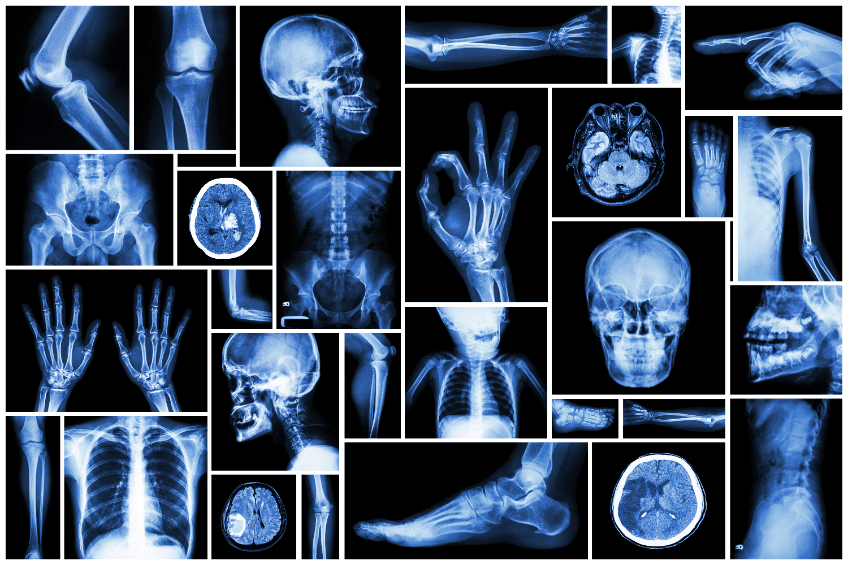

A frequently discussed question in healthcare today is what impact artificial intelligence (AI) will have on radiology. Robert Schier, MD, a radiologist in Orinda, CA with RadNet, a leader in outpatient imaging, published an article in the Journal of the American College of Radiology titled “Artificial Intelligence and the Practice of Radiology: An Alternative View.” He wrote,

“What we will see in radiology eventually are diagnostic image interpretation systems that have read every textbook and journal article; know all of a patient’s history, records, and laboratory reports; and have memorized millions of imaging studies. It may help to imagine these systems not as a collection of circuits in a console, but as an army of fellowship-trained radiologists with photographic memories, IQs of 500, and no need for food or sleep.”

Schier argues that AI will eventually allow computers to surpass human intelligence, and he writes that computers will replace humans just as steam and gasoline engines replaced donkeys, horses, and oxen. Schier is not as optimistic about preserving radiology jobs as some of his colleagues in the industry. He said further,

“The advent of computers that can accurately interpret diagnostic imaging studies will upend the practice of radiology. The two currently unanswered questions are just how much upending there will be and how long it will take to happen. There are vastly differing opinions, from the apocalyptic claim that AI will make all radiologists extinct to the delusional assertion that computers will always merely assist—and never replace—radiologists. Both extremes are mistaken, but the truth is in the direction of the first.”

Evidence is mounting to support Schier’s point of view. Research scientists at Stanford University have developed a new AI algorithm called CheXNeXt, which can screen a chest X-ray in seconds for more than a dozen types of disease. For most of the diseases tested, its results were on par with the readings of radiologists.

CheXNeXt is trained to predict diseases from X-ray images and to highlight the parts of an image that are most indicative of each predicted disease. The algorithm was trained on a dataset from the National Institutes of Health (NIH) containing more than 110,000 frontal-view digitized chest X-rays of more than 30,000 patients. Each image was labeled with the radiologist’s diagnosis, which an automated procedure extracted from the corresponding radiology report.

To compare humans and AI, the researchers selected 420 X-rays covering 14 different diseases. Three radiologists reviewed the X-rays one at a time. Their conclusions were designated as the ground truth, the reference standard treated as fact. This ground truth was used to test how well the algorithm had learned the telltale signs of the 14 diseases.
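To make the idea of a ground truth concrete, here is a minimal Python sketch of how an algorithm might be scored against a panel of reviewers. This is not the Stanford team's code. The description above does not say how the three radiologists' conclusions were combined or which metric was used, so the majority vote, the simple accuracy score, and all of the toy data below are assumptions for illustration only.

```python
from collections import Counter


def majority_label(votes):
    """Return the finding most reviewers chose (assumes an odd number of votes)."""
    return Counter(votes).most_common(1)[0][0]


def accuracy(predictions, ground_truth):
    """Fraction of predictions that match the ground truth."""
    matches = sum(p == t for p, t in zip(predictions, ground_truth))
    return matches / len(ground_truth)


# Hypothetical toy data: three reviewers' yes/no findings
# for a single disease on five X-rays.
reviewer_votes = [
    (True, True, False),
    (False, False, False),
    (True, False, True),
    (False, True, False),
    (True, True, True),
]

# Assumption: the panel's majority opinion serves as the ground truth.
ground_truth = [majority_label(votes) for votes in reviewer_votes]

# Hypothetical algorithm output for the same five X-rays.
algorithm_predictions = [True, False, False, False, True]

print(accuracy(algorithm_predictions, ground_truth))
```

In this toy case the algorithm agrees with the panel on four of the five images. A real study would repeat the comparison for each disease and would likely use more robust statistics than plain accuracy.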

Matthew Lungren, MD, MPH, Assistant Professor of Radiology at Stanford, said,

“We treated the algorithm like it was a student; the NIH data set was the material we used to teach the student, and the 420 images were like the final exam.”

To further evaluate the performance of the algorithm compared with human experts, the scientists asked an additional nine radiologists from multiple institutions to also take the same final exam.

Reading the 420 X-rays took the radiologists about three hours. The algorithm scanned the X-rays and diagnosed all pathologies in about 90 seconds. For 10 diseases, the algorithm performed just as well as radiologists; for three, it underperformed compared with radiologists; and for one, the algorithm outdid the experts. Lungren summed up the research as follows,

“We should be building AI algorithms to be as good or better than the gold standard of human, expert physicians. Now, I’m not expecting AI to replace radiologists any time soon, but we are not truly pushing the limits of this technology if we’re just aiming to enhance existing radiologist workflows. Instead, we need to be thinking about how far we can push these AI models to improve the lives of patients anywhere in the world.”

Lungren’s last point is important. In the United States we have a significant resource of radiologists in our healthcare system. In other parts of the world that is not the case. His statement about not expecting AI to replace radiologists any time soon, however, is debatable. Schier said, “If computers can do something now, they will only get better at it. If computers cannot do something now, they will probably learn how to.” His editorial commented on two different opinions about the future of AI and radiology. One is the “apocalyptic” assertion that AI will replace radiologists. The other, which he calls the “delusional assertion,” is that computers will always assist radiologists but never replace them. His bottom line in the editorial is,

“Today there are 34,000 radiologists in the United States. Unless radiologists do things other than interpret imaging studies, there will be need for far fewer of them. This is a complex situation filled with unknowns, and events are moving fast. We need to figure how to deal with this coming change. And we need to do it in a hurry.”

The Barrier

There are many medical AI research projects underway, but adoption in hospitals and medical centers has not grown substantially. The barrier is not the technology. Shinjini Kundu, a medical researcher and physician at the University of Pittsburgh School of Medicine, said, “The barrier is the trust aspect. You may have a technology that works, but how do you get humans to use it and rely on it?” AI is being applied in medicine by absorbing a very large amount of data, analyzing it, finding relationships, and making a diagnosis based on what it has learned. In effect, a lot of data goes into a “black box” where algorithms are applied, and out comes the diagnosis. This is quite different from how physicians diagnose. If physicians can’t see inside the black box, they will not trust the accuracy of the diagnosis.

Summary

We are at the early stages of applying AI to various fields of medicine. The more diagnoses an AI system makes, the more accurate it becomes, because its learning is cumulative. The pace of AI learning is increasing. The other encouraging news is that AI researchers are working on techniques to open up the black boxes and share the methodology with physicians. This will likely result in important feedback from physicians on how the algorithms can be improved. I believe the accuracy of diagnosis by medical AI will ultimately surpass that of human physicians. Next week I will write about AI in cardiology.